SMT 00.5.70 Released!

by Michal Tinthofer on 17/04/2019

A new version of SMT is now available; you can have a look at the summary of changes.

Sometimes it is nice to have a tool or report that shows how much your backup storage has degraded over time, especially through fragmentation and auto-growth/shrink operations. But it often takes a lot of administrators' time to set up baseline monitoring, collect the data, and analyze it. I found one useful way to save that time.

It also has some prerequisites. I am assuming that you are not cleaning up your backup history table (as usual :) ) and that you are backing up your databases to storage which also holds some database files. So it is mainly useful in smaller environments with smaller storage, where data files share the same DAS or a centralized SAN solution with a shared RAID group.

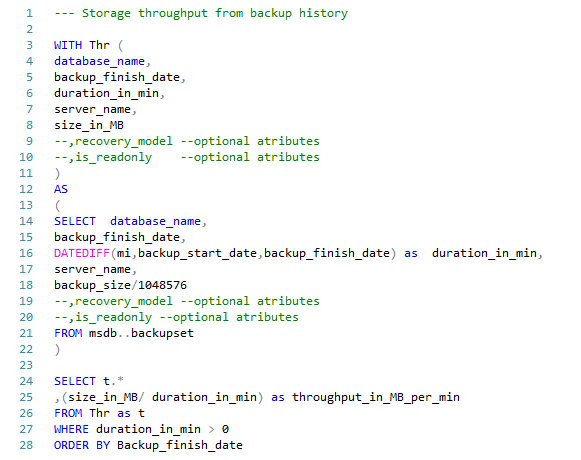

Then you can use my script to extract that information:  StorageThr.sql

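The script itself is not reproduced here, so the following is only a minimal sketch of the kind of query such a script could run, not the author's StorageThr.sql. It assumes the backup history is still present in `msdb.dbo.backupset` and derives throughput from the backup size and the start and finish times. The filter on full backups and the `backup_size_MB` column are assumptions made for illustration.

```sql
-- Sketch only: not the author's StorageThr.sql.
-- Reads backup history from msdb and derives a per-backup throughput value.
SELECT
    bs.database_name,
    bs.backup_start_date,
    bs.backup_finish_date,
    bs.backup_size / 1048576.0 AS backup_size_MB,   -- backup_size is stored in bytes
    -- MB per minute; NULLIF returns NULL instead of failing
    -- when a backup started and finished within the same second
    (bs.backup_size / 1048576.0)
        / NULLIF(DATEDIFF(SECOND, bs.backup_start_date, bs.backup_finish_date) / 60.0, 0)
        AS throughput_in_MB_per_min
FROM msdb.dbo.backupset AS bs
WHERE bs.type = 'D'   -- assumption: only full database backups, to keep samples comparable
ORDER BY bs.backup_start_date;
```

Very short backups produce noisy values, so in practice it may help to look only at larger databases or to average the results per day before charting them.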

As a result you will get a list of values with a throughput_in_MB_per_min column for every backup made. These values can then be exported to reports or graphs like this one:

Michal Tinthofer is the face of the Woodler company, which (as he is) is fully committed to providing complete support of Microsoft SQL Server products to its customers. He often acts as a database architect, performance tuner, administrator, SQL Server monitoring developer (Woodler SMT) and, last but not least, a trainer of people who are developing their skills in this area. His current "Quest" is to help admins and developers quickly and accurately identify issues related to their work and the SQL Server runtime.

