Live Query Tuning

by Michal Kovaľ on 05/09/2024During last SQL Server Bootcamp 2024 in Brno - our colleague Jan Repka presented a live query tuning session.

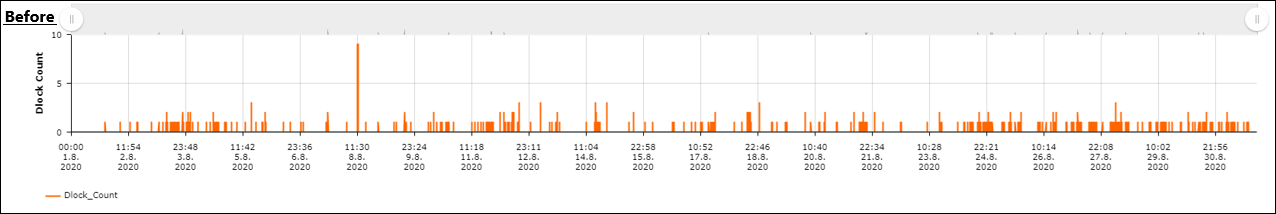

Read moreIn high-concurrency environments, deadlocks are more than just technical glitches...they are silent performance killers. Recently, our team tackled a significant challenge on a SQL Server 2019 instance hosting a Camunda-based workflow application. The issues were primarily localized in the process timing database, specifically during critical application operations like stopping workflows.

The Anatomy of the Conflict

Our initial investigation identified the "battleground" within Camunda’s core runtime and history tables, such as ACT_HI_ACTINST (Historic Activity Instance) and ACT_RU_EXECUTION (Runtime Execution). The deadlock patterns revealed a classic struggle between UPDATE operations on history and INSERT operations into runtime tables.

The main hurdles identified:

The Road to Stability: A Three-Phase Approach

We didn't just apply a "quick fix." We followed a methodical tuning strategy:

Phase 1: Data Hygiene (The Cleanup) You cannot tune an engine clogged with debris. We prioritized the cleanup of the massive history tables. Once the data volume was reduced, the true patterns of the deadlocks became much clearer.

Phase 2: Orchestrating Maintenance To eliminate resource contention, we rescheduled the SMT_IndexOptimize jobs to the lowest-usage windows (01:00 AM and 03:00 AM). Furthermore, we implemented a "hard stop" at 06:00 AM to ensure that maintenance tasks never bleed into daily production traffic.

Phase 3: Targeted Indexing Analysis of the deadlock XMLs highlighted a recurring culprit: DELETE FROM ACT_RU_EXECUTION WHERE ID_ = @P0 AND REV_ = @P1 The engine was struggling because no single index covered both the ID_ and REV_ columns. By creating a composite index on (ID_, REV_), we allowed the SQL Server to locate and lock rows instantly, drastically reducing the "lock-hold" time.

The Impact: Data-Driven Success

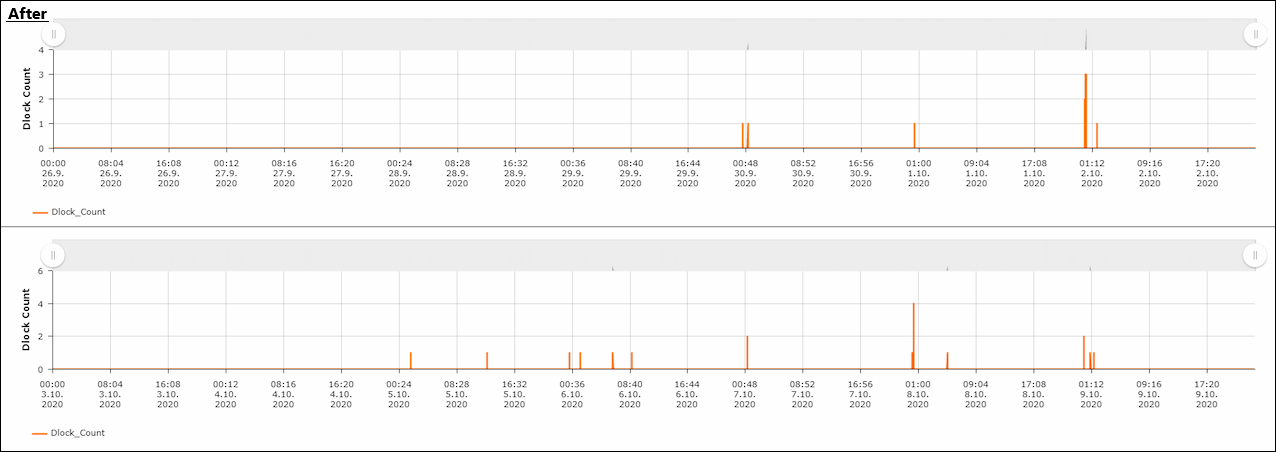

After deploying these changes and tracking them through our monitoring tools, the results were definitive. While minor deadlocks occasionally occur during intensive night maintenance, the volume of major application-level blockages has been reduced by approximately 93%.

Lessons for DBAs

Resolving deadlocks is rarely about a single "magic" query. It’s about the synergy between data volume management, smart scheduling, and precision indexing. Our next steps involve monitoring other workflow-related databases to proactively apply these lessons before issues arise.

Michal is a technically proficient SQL Server Specialist with a proven track record in resolving incidents and implementing changes within large-scale database infrastructures, ensuring maximum availability of services. Concurrently, as a Digital Content and Marketing Specialist, his priority is building strong online brand identities through strategic communication and creative storytelling. He consistently seeks new ways to enhance digital interaction, believing quality digital communication is key to success in today's connected world.

During last SQL Server Bootcamp 2024 in Brno - our colleague Jan Repka presented a live query tuning session.

Read moreIn the world of database administration, index maintenance is a necessary evil. Done right, it keeps your queries snappy; done wrong, it becomes a resource-hungry monster that eats your maintenance window alive.

Read moreIn high-performance SQL Server environments, how you "slice" your CPU resources is just as important as how many cores you have. We recently tackled a case where a customer was plagued by high SOS_SCHEDULER_YIELD and CXPACKET waits.

Read more